Darren Ong · documentation · 8 min read

Data Engineering Services: What You Get & Why It Matters

Your data is scattered across systems and your team is spending more time fixing pipelines than building features. Here is what professional data engineering services deliver and when to outsource vs build in-house.

Introduction

Your data team is spending 80% of their time fixing broken pipelines and manually moving data between systems. Meanwhile, your product team is waiting weeks for clean data to build new features, and your executives are making decisions on outdated reports.

Sound familiar?

This is the reality for most growing companies. Data engineering is the foundation of everything data-driven, yet it is often the most overlooked and underfunded area.

In this guide, I will break down:

- What data engineering services actually deliver

- The true cost of building vs outsourcing

- When to hire in-house vs outsource

- What to expect from a data engineering engagement

- Real client examples with ROI

Let’s dive in.

What is Data Engineering?

Data engineering is the practice of designing, building, and maintaining the infrastructure that makes data usable.

Think of it this way: if data is crude oil, data engineers build the pipelines, refineries, and distribution systems that turn it into usable fuel.

What Data Engineers Actually Do

1. Data Pipeline Development

- Extract data from source systems (APIs, databases, SaaS tools)

- Transform and clean data (remove duplicates, standardize formats)

- Load data into destination systems (data warehouse, data lake)

- Monitor and maintain pipeline health
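
To make the four steps above concrete, here is a minimal sketch of an extract-transform-load run using only the Python standard library. The sample CSV, the `customers` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for a real warehouse. Treat it as an illustration of the shape of a pipeline, not a production design.

```python
import csv
import io
import sqlite3

# Hypothetical raw export from a source system (stands in for an API or SaaS tool).
RAW_CSV = """customer_id,email,signup_date
1,Ana@Example.com ,2024-01-05
2,ben@example.com,2024-01-07
1,ana@example.com,2024-01-05
"""


def extract(raw: str) -> list[dict]:
    """Extract: read rows from the source export."""
    return list(csv.DictReader(io.StringIO(raw)))


def transform(rows: list[dict]) -> list[tuple]:
    """Transform: standardize the email format and drop duplicate customers."""
    seen = set()
    cleaned = []
    for row in rows:
        key = int(row["customer_id"])
        if key in seen:
            continue  # keep the first record per customer_id
        seen.add(key)
        cleaned.append((key, row["email"].strip().lower(), row["signup_date"]))
    return cleaned


def load(rows: list[tuple], conn: sqlite3.Connection) -> int:
    """Load: write cleaned rows to the destination table and return its row count."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers "
        "(customer_id INTEGER PRIMARY KEY, email TEXT, signup_date TEXT)"
    )
    conn.executemany("INSERT OR REPLACE INTO customers VALUES (?, ?, ?)", rows)
    conn.commit()
    return conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]


def run_pipeline(raw: str, conn: sqlite3.Connection) -> int:
    loaded = load(transform(extract(raw)), conn)
    # Monitor: a basic health check that fails loudly if nothing was loaded.
    if loaded == 0:
        raise RuntimeError("pipeline loaded zero rows")
    return loaded


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")  # in-memory stand-in for a warehouse
    print(run_pipeline(RAW_CSV, connection))
```

In a real engagement each function would typically be replaced by a managed connector, a transformation layer, and a warehouse loader, but the extract, transform, load, monitor sequence stays the same.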

2. Data Infrastructure Design

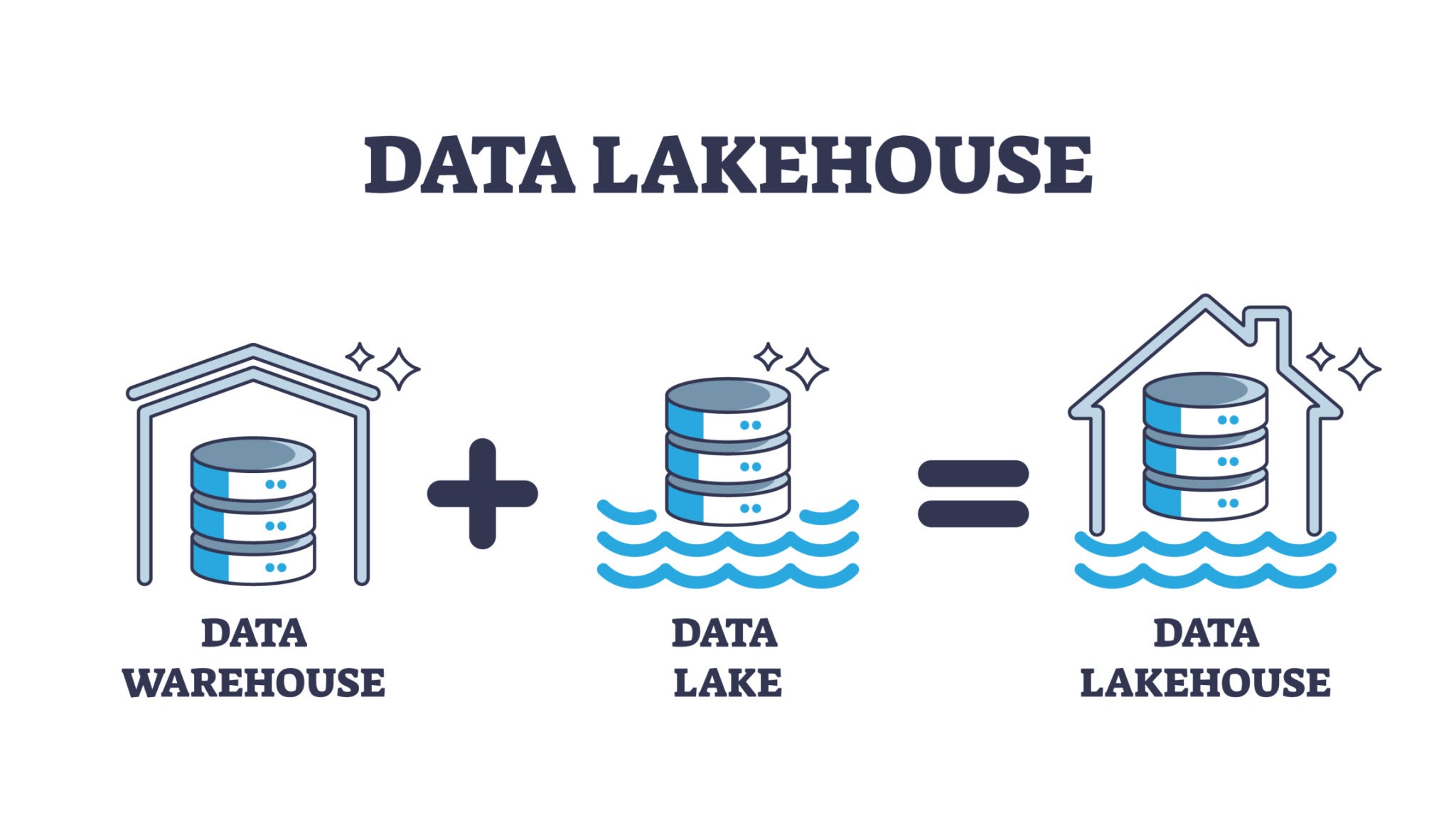

- Choose the right architecture (data warehouse vs data lake vs lakehouse)

- Select technology stack (Snowflake, BigQuery, Redshift, etc.)

- Design for scalability and performance

- Implement security and governance

3. Data Quality & Governance

- Define data quality standards

- Implement data validation rules

- Set up monitoring and alerting

- Document data lineage and schemas
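
As a rough illustration of what "data validation rules" can look like in practice, the sketch below expresses each rule as a named check and counts failures per rule. The `Rule` class, the example rules, and the `orders` fields are hypothetical. Dedicated data quality tools offer much more, so read this as the underlying idea only.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    name: str
    check: Callable[[dict], bool]  # returns True when the row passes


def _non_negative_amount(row: dict) -> bool:
    amount = row.get("amount")
    return isinstance(amount, (int, float)) and amount >= 0


def _three_letter_currency(row: dict) -> bool:
    currency = row.get("currency")
    return isinstance(currency, str) and len(currency) == 3


# Hypothetical rules for an "orders" dataset.
RULES = [
    Rule("order_id is present", lambda row: row.get("order_id") is not None),
    Rule("amount is non-negative", _non_negative_amount),
    Rule("currency is a 3-letter code", _three_letter_currency),
]


def validate(rows: list[dict], rules: list[Rule]) -> dict[str, int]:
    """Count failing rows per rule so the counts can feed monitoring and alerting."""
    failures = {rule.name: 0 for rule in rules}
    for row in rows:
        for rule in rules:
            if not rule.check(row):
                failures[rule.name] += 1
    return failures


if __name__ == "__main__":
    sample = [
        {"order_id": 1, "amount": 25.0, "currency": "SGD"},
        {"order_id": None, "amount": -5, "currency": "USD"},
        {"order_id": 3, "amount": 10, "currency": "US"},
    ]
    for name, count in validate(sample, RULES).items():
        print(f"{name}: {count} failing row(s)")
```

Keeping rules as data rather than scattered `if` statements makes it easier to document the standards and to alert on a specific rule when its failure count rises.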

4. Analytics Enablement

- Create clean, modeled datasets for analysts

- Build self-service data models

- Optimize query performance

- Support BI and reporting tools

Common Data Engineering Challenges

Challenge 1: Data Silos

Problem: Data is trapped in separate systems (CRM, ERP, marketing tools, etc.) and cannot be combined for analysis.

Impact:

- Cannot get a unified view of customers

- Reporting is manual and error-prone

- Decisions are made on incomplete data

Solution: Build a centralized data platform with automated pipelines from all source systems.

Related: Case Study: Powering Personalization for a Global MNC - How we unified data across subsidiaries for a global MNC.

Challenge 2: Broken or Unreliable Pipelines

Problem: Pipelines fail frequently, data is outdated, and no one knows who is responsible for fixing them.

Impact:

- Executives do not trust the data

- Analysts spend hours debugging instead of analyzing

- Business opportunities are missed due to stale data

Solution: Implement robust pipeline architecture with monitoring, alerting, and clear ownership.
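
One simple form of monitoring with clear ownership is a freshness check: every pipeline records its last successful run, a maximum allowed staleness, and a named owner. The sketch below assumes those three fields exist. The pipeline names, owners, and thresholds are made up for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class PipelineStatus:
    name: str
    owner: str                # who gets alerted (hypothetical team names below)
    last_success: datetime    # timestamp of the last successful run
    max_staleness: timedelta  # how old the data may get before it counts as stale


def find_stale(pipelines: list[PipelineStatus], now: datetime) -> list[str]:
    """Return one alert message per pipeline whose data is older than allowed."""
    alerts = []
    for p in pipelines:
        age = now - p.last_success
        if age > p.max_staleness:
            hours = age.total_seconds() / 3600
            alerts.append(
                f"[STALE] {p.name}: last success {hours:.1f}h ago, owner: {p.owner}"
            )
    return alerts


if __name__ == "__main__":
    now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
    statuses = [
        PipelineStatus("orders_hourly", "data-eng",
                       datetime(2024, 1, 2, 11, 0, tzinfo=timezone.utc), timedelta(hours=2)),
        PipelineStatus("crm_sync", "sales-ops",
                       datetime(2024, 1, 1, 6, 0, tzinfo=timezone.utc), timedelta(hours=24)),
    ]
    for message in find_stale(statuses, now):
        print(message)
```

Because every alert carries an owner, a stale dataset always has someone responsible for it, which addresses the "no one knows who is responsible" part of the problem.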

Challenge 3: Scaling Issues

Problem: What worked at $10M revenue breaks at $50M. Queries take hours, pipelines are slow, and costs are exploding.

Impact:

- Cannot support business growth

- Data team is constantly firefighting

- Technical debt is accumulating

Solution: Design scalable architecture from the start (or refactor before it becomes critical).

Related: From Data Overload to Actionable Insights - How executives can simplify data management at scale.

Challenge 4: Skills Gap

Problem: Your team knows SQL and Excel, but lacks expertise in the modern data stack (cloud platforms, orchestration tools, etc.).

Impact:

- Cannot adopt new technologies

- Stuck with legacy systems

- Competitors are moving faster

Solution: Outsource to specialists while upskilling your internal team.

Related: The Benefits of Outsourcing Data Management - Why outsourcing makes sense for growing companies.

What You Get from Data Engineering Services

Engagement Model 1: Project-Based

Best for: Specific initiatives with defined scope

Typical Deliverables:

- Data warehouse setup (Snowflake, BigQuery, Redshift)

- Core data pipelines (5-10 source systems)

- Data modeling for analytics

- Documentation and training

- Knowledge transfer to internal team

Timeline: 6-12 weeks

Investment: Starting at $75,000 USD (one-time)

Note: Minimum engagement for project-based work. For smaller needs, consider our monthly retainer.

Engagement Model 2: Monthly Retainer

Best for: Ongoing data engineering support

Typical Deliverables:

- Pipeline development and maintenance (50 hours/month)

- Infrastructure optimization

- Data quality monitoring

- Ad-hoc engineering support

- Regular architecture reviews

Timeline: Ongoing (month-to-month)

Investment: $3,500/month (or $3,325/month on yearly plan)

Engagement Model 3: Fractional Data Engineering Lead

Best for: Companies needing technical leadership + execution

Typical Deliverables:

- Data architecture strategy

- Technology stack decisions

- Hands-on pipeline development

- Team mentoring and training

- Vendor management

Timeline: Ongoing (20-40 hours/month)

Investment: $2,000-$3,800/month (fractional CDO tier)

Build In-House vs Outsource: Cost Comparison

In-House Data Engineer (Annual Cost)

| Cost Component | Amount |
|---|---|
| Base Salary (Singapore) | $80,000-$150,000 |
| Base Salary (Malaysia) | $40,000-$80,000 |
| Base Salary (Australia) | $100,000-$180,000 |
| Benefits (20-30%) | $16,000-$54,000 |
| Recruitment Fees | $16,000-$54,000 |
| Equipment + Software | $5,000-$10,000 |
| Total Year 1 | $157,000-$348,000 |

Time to Hire: 3-6 months

Risk: High (permanent hire, may not be the right fit)

Outsourced Data Engineering (Annual Cost)

| Engagement | Monthly | Annual |
|---|---|---|
| Monthly Retainer | $3,500 | $42,000 |
| Yearly Retainer | $3,325 | $39,900 |
| Project-Based | Starting at $75,000 | N/A |

Time to Start: 1-2 weeks

Risk: Low (month-to-month, can scale up/down)

Savings: 70-85% with Outsourcing (Retainer)

Plus:

- Immediate access to senior expertise

- No recruitment time or costs

- Flexibility to scale based on needs

- Access to entire team, not just one person
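
The savings figure can be reproduced from the two tables above. The sketch below compares each retainer's annual cost against the low and high ends of the in-house "Total Year 1" range. The function name is mine, and all dollar amounts are copied from the tables. With these inputs the result comes out at roughly 73% to 89%, in the same ballpark as the quoted 70-85%.

```python
def savings_pct(in_house_annual: float, outsourced_annual: float) -> float:
    """Percentage saved by paying outsourced_annual instead of in_house_annual."""
    return (1 - outsourced_annual / in_house_annual) * 100


IN_HOUSE_LOW, IN_HOUSE_HIGH = 157_000, 348_000  # "Total Year 1" range from the table
MONTHLY_RETAINER_ANNUAL = 3_500 * 12            # $42,000
YEARLY_RETAINER_ANNUAL = 3_325 * 12             # $39,900

if __name__ == "__main__":
    plans = [("monthly", MONTHLY_RETAINER_ANNUAL), ("yearly", YEARLY_RETAINER_ANNUAL)]
    for label, cost in plans:
        low = savings_pct(IN_HOUSE_LOW, cost)
        high = savings_pct(IN_HOUSE_HIGH, cost)
        print(f"{label} retainer: {low:.0f}% to {high:.0f}% saved")
```

Swap in your own salary and retainer figures to see how the comparison changes for your market.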

Project-Based Note: At $75K+, project-based work is aimed at enterprise transformations. It offers the best ROI for companies with $50M+ in revenue and complex, multi-system integrations.

When to Outsource Data Engineering

Good Fit for Outsourcing

Company Stage:

- Revenue: $5M-$200M

- Team: 20-500 employees

- Pre-seed to Series C (or profitable SMB)

Technical Situation:

- No dedicated data engineer yet

- Current engineer is overloaded

- Need specific expertise (cloud migration, real-time pipelines, etc.)

- Want to test data initiatives before committing to full-time hire

Timeline:

- Need results in weeks, not months

- Cannot wait 3-6 months for hiring process

- Have immediate data challenges to solve

Budget:

- Cannot justify $150K+ for full-time engineer

- Have $3K-5K/month budget for data engineering (retainer)

- OR have $75K+ budget for transformation project (project-based)

- Want predictable costs

Related: Fractional CDO Cost Breakdown 2026 - Compare fractional CDO vs outsourced team vs in-house hiring costs.

When Project-Based Makes Sense ($75K+)

Good Fit:

- Revenue: $50M+

- Multiple systems need integration (10+ sources)

- Need complete data platform from scratch

- Have dedicated internal team to hand off to

- Timeline: 8-12 weeks for full build

Examples:

- Data warehouse + 10+ pipelines + data modeling

- Cloud migration (on-prem to AWS/GCP/Azure)

- Real-time streaming infrastructure

- Enterprise data governance implementation

Real Client Examples

Case Study 1: E-commerce Company - Data Infrastructure Setup

Client: Growing e-commerce company ($50M revenue)

Challenge:

- Data scattered across Shopify, NetSuite, marketing platforms

- Manual reporting taking 20+ hours/week

- Cannot track customer lifetime value accurately

Solution:

- Built ELT pipelines from 8 source systems to BigQuery

- Created 35+ automated metrics (operations, marketing, finance)

- Integrated QuickBooks data via custom API pipeline

- Implemented cohort analysis for retention tracking

Results:

- 20 hours/week saved in manual reporting

- 35+ metrics automated and reliable

- QuickBooks data integrated (P&L, invoices, customers)

- Foundation laid for ML initiatives

Investment: $3,500/month (outsourced retainer)

ROI: $52K/year in efficiency gains alone
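
For readers who want to run the same calculation on their own numbers, here is the arithmetic behind an efficiency figure like this one. The 20 hours/week comes from the case study. The $50/hour value of staff time is my assumption, chosen because it is the rate at which 20 hours/week works out to $52K/year. The client's actual rate is not stated.

```python
WEEKS_PER_YEAR = 52


def annual_efficiency_gain(hours_saved_per_week: float, hourly_value: float) -> float:
    """Yearly value of time saved = weekly hours saved x weeks per year x value per hour."""
    return hours_saved_per_week * WEEKS_PER_YEAR * hourly_value


if __name__ == "__main__":
    # 20 hours/week is from the case study; $50/hour is an assumed loaded cost.
    gain = annual_efficiency_gain(hours_saved_per_week=20, hourly_value=50)
    print(f"${gain:,.0f} per year")
```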

Related: Supercharging Our Clients’ Data - See how we built the ELT pipelines and Looker Studio dashboards for this client.

Case Study 2: SaaS Startup - Product Analytics Pipeline

Client: B2B SaaS startup ($10M revenue, raising Series B)

Challenge:

- Product usage data not tracked

- Cannot demonstrate unit economics to investors

- Need data room for due diligence

Solution:

- Implemented event tracking (Mixpanel)

- Built product analytics pipeline

- Created investor dashboard (MRR, churn, LTV, CAC)

- Set up data room for due diligence

Results:

- Raised $15M Series B

- Data strategy was key differentiator with investors

- Clear unit economics demonstrated

- Post-funding: Hired full-time data lead

Investment: $8,000 (project-based, 4 weeks)

ROI: $15M raise enabled

Related: The Strategic Data Solution - How fractional CDO leadership combined with execution delivered this result.

Case Study 3: Digital Property Platform - Data Lakehouse

Client: Leading property platform (millions of users)

Challenge:

- Data siloed across Inventory, CRM, Finance systems

- Market analysis taking weeks

- Search UX failing (misspelled queries returned zero results)

Solution:

- Built Data Lakehouse on Snowflake + AWS

- Centralized data from all mission-critical systems

- Created real-time analytics views

- Deployed LLM-powered fuzzy search

Results:

- Market analysis 40% faster

- Search conversion rate increased

- Single source of truth established

- Hundreds of hours saved monthly

Investment: $12,000 (project-based, 8 weeks)

ROI: $52K/year in efficiency + conversion lift

Related: Case Study: Unlocking Market Insights - Full technical deep-dive on this transformation.

What to Look for in a Data Engineering Partner

Questions to Ask

Technical Expertise:

- What cloud platforms are you certified in? (AWS, GCP, Azure)

- What data stack do you recommend for our use case?

- Can you show examples of similar pipelines you have built?

- How do you handle data quality and monitoring?

- What is your approach to documentation and knowledge transfer?

Engagement Model:

- Who will be working on our project?

- How do you communicate progress?

- What happens if we are not satisfied?

- Can we scale up/down during the engagement?

- Do you provide ongoing support after project completion?

Related Reading: Fractional CDO Cost Breakdown 2026 - Understand the leadership layer that oversees data engineering decisions.

Red Flags

- Cannot explain technical decisions in business terms

- No portfolio of similar projects

- Pushes specific technology without understanding your needs

- Vague about deliverables and timeline

- No monitoring or alerting strategy

- Does not include documentation in scope

Green Flags

- Asks detailed questions about your business goals

- Recommends appropriate technology for your stage

- Provides clear scope, timeline, and deliverables

- Includes monitoring, alerting, and documentation

- Focuses on knowledge transfer, not dependency

- Transparent pricing (same worldwide, no hidden fees)

Technology Stack Recommendations

For Startups ($5M-$50M Revenue)

Data Warehouse: Snowflake or BigQuery

ELT Tool: Fivetran or Airbyte

Transformation: dbt (data build tool)

Orchestration: Airflow or Prefect

BI Tool: Looker Studio or Power BI

Why: Low maintenance, scales well, minimal engineering overhead
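
To show how these layers fit together, the sketch below models a daily run as a dependency graph: ingestion first, then transformation, then the BI refresh. It uses Python's standard `graphlib` module rather than Airflow or Prefect, and the task names are hypothetical. An orchestrator does the same ordering for you and adds scheduling, retries, and alerting on top.

```python
from graphlib import TopologicalSorter

# Hypothetical task graph: each task maps to the set of tasks it depends on.
TASKS = {
    "ingest_crm": set(),
    "ingest_billing": set(),
    "transform_staging": {"ingest_crm", "ingest_billing"},
    "transform_marts": {"transform_staging"},
    "refresh_dashboard": {"transform_marts"},
}


def execution_order(tasks: dict[str, set[str]]) -> list[str]:
    """Return an order in which every task runs after all of its dependencies."""
    return list(TopologicalSorter(tasks).static_order())


if __name__ == "__main__":
    for step, task in enumerate(execution_order(TASKS), start=1):
        print(step, task)
```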

Related: Data Lake, Data Warehouse, and Data Lakehouse - Understand which architecture fits your stage.

For Growth Companies ($50M-$200M Revenue)

Data Warehouse: Snowflake, BigQuery, or Redshift

Data Lake: Amazon S3 or Google Cloud Storage

ELT Tool: Fivetran, Airbyte, or custom pipelines

Transformation: dbt with modular models

Orchestration: Airflow, Dagster, or Prefect

BI Tool: Looker, Power BI, or Tableau

Why: More control, better performance, supports advanced use cases

For Enterprise ($200M+ Revenue)

Data Lakehouse: Databricks or Snowflake with data lake integration

Real-Time: Apache Kafka or Amazon Kinesis for streaming

Data Catalog: DataHub or Amundsen

Governance: Collibra or Alation

Orchestration: Airflow at scale

Why: Enterprise-grade governance, real-time capabilities, supports complex architectures

Related: The Death of the Data Migration Cycle - Why enterprise companies are moving to zero-copy architectures.

Next Steps

Summary:

- Data engineering is the foundation of data-driven decisions

- Outsourcing saves 70-85% vs an in-house hire (retainer model)

- Time to value: 1-2 weeks vs 3-6 months for hiring

- Project-based engagements start at $75K for enterprise transformations

- Good fit for companies with $5M-$200M in revenue

Is outsourced data engineering right for you?

If you are spending more time fixing pipelines than analyzing data, or if you cannot justify $150K+ for a full-time data engineer, outsourced data engineering could be the perfect solution.

What to do next:

Schedule Your Free Consultation →

We will discuss your specific situation, data challenges, and whether outsourced data engineering makes sense for you. No sales pitch, just honest advice.

More Reading:

- Fractional CDO Cost Breakdown 2026 - Leadership layer pricing

- Data Infrastructure ROI Guide - How to justify investment to CFO

- Our Services - Complete overview of all data services

- Case Studies - More client success stories